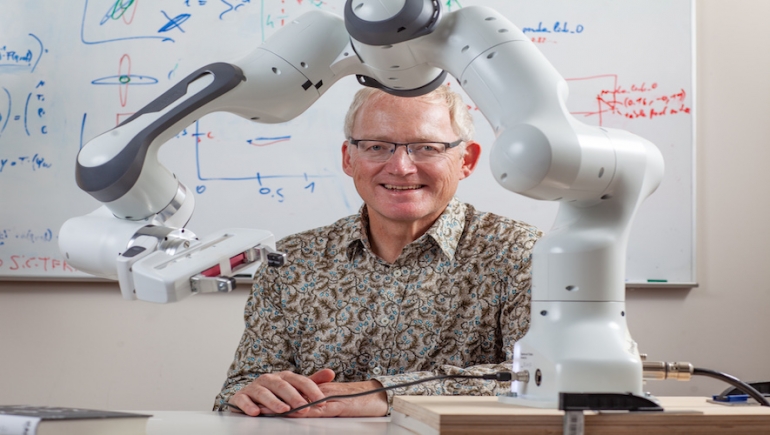

Scientia Professor Toby Walsh is a leading researcher in artificial intelligence (AI) at UNSW Sydney and CSIRO’s Data61. He has been an Associate of the Australian Human Rights Institute since day one, and advocates for managing the human rights impacts of new technologies and for the responsible use of AI. He has received a number of prestigious awards for his research and has authored two books about AI for the general public, the most recent being ‘2062: The World that AI Made’.

Professor Toby Walsh spoke to Laura Melrose about his research.

What are some of the human rights challenges presented by artificial intelligence?

Technology has the potential to produce immense good, and also immense bad. Facial recognition, for example, has been used devastatingly in China to profile and subdue the Uyghur people, while in India it has helped reunite children who had long been separated from their parents. AI in warfare is another example. Clearing a minefield is the perfect job for a robot, but tasking machines with killing raises great legal, moral, technical and ethical challenges.

Other issues have a considerable impact on our everyday lives, such as digital privacy, the misuse of social media in politics, deepfakes, algorithms grading student exams, even robodebt. I’ve been working with Ed Santow, the Australian Human Rights Commissioner, on identifying the policy responses we need in Australia to deal with these challenges.

Can machines adequately protect human rights?

It’s important to remember that robots don’t have rights. However, this creates an accountability gap. Without rights, robots can’t be held accountable for their actions, yet in some cases, such as autonomous cars and weapons, they are making decisions without sufficient human oversight.

These human rights questions are evolving alongside the technologies, so we need to come up with good answers. That’s why organisations like the Australian Human Rights Institute are so important.

You’ve just been awarded an ARC Laureate Fellowship to investigate how to build AI systems that are more trustworthy. What does this research involve?

We have to embed AI systems in institutions, in society, in norms and in legal frameworks. We have to think about many different issues, such as trustworthiness, fairness, explicability, transparency, auditability, privacy and respect, and then how to answer these from a technical perspective. But these questions don’t have purely technical answers. They have many other dimensions that touch on areas like philosophy, sociology, anthropology, law and human rights.

One challenge is building systems that are trained on historical data, as this data captures unconscious human biases. We try to eliminate those biases by designing systems free of their influence, but this is difficult when the field is dominated by white men. We know that more diverse teams will create more inclusive, less biased products. Whatever decision an AI system makes will be biased in some way. We just have to be satisfied that the bias reflects what we want to achieve, favouring the most deserving, and that society is happy with that.

These are often the same old questions we’ve been asking for centuries. But because we’re programming computers, which are frustratingly literal, one new twist is that we need to be much more precise. We need to program in answers about concepts like human rights and equity.

How is AI being used in the response to COVID-19?

AI is being used throughout the whole lifecycle of the disease, from detection to designing therapies. Machines are being used to scan X-rays and identify lung damage faster and more accurately than human doctors. AI can identify the patients most at risk, mine data about the most effective therapies and drugs, and assist in the development of vaccines. More controversially, it has also been used in surveillance to identify people whose temperatures are higher than normal. All in all, AI holds great promise for improving healthcare.

What are the big challenges ahead in this field?

Perhaps the biggest challenge is how we address issues in this space which cross technology, philosophy and politics. This is similar to the discussion around the climate emergency. We cannot consider just the technical challenges without considering the political and social dimensions to the problem too.

Unfortunately, science is increasingly politicised. Hopefully the lesson from 2020 is how important it is to try to have science drive policy. We’ve failed at that with climate change and with the pandemic. With AI, I’m still hopeful we can address many of the challenges before they hurt us.